The Early-Stage Reinforcement Learning Model Shaking Up Predictive Maintenance

Source Publication: Springer Science and Business Media LLC

Primary Authors: Tondo

A preliminary study has trained an artificial intelligence agent to decide when factory equipment needs repairing, reporting a 96.6% recall rate on noisy industrial data. Turning machine health warnings into practical, cost-effective action has long troubled engineers trying to optimise predictive maintenance systems.

The Prediction Gap in Predictive Maintenance

Modern manufacturing relies heavily on keeping machines running without interruption. We have become incredibly adept at using deep learning to monitor equipment health and forecast potential breakdowns.

However, knowing a machine might fail is entirely different from knowing exactly when to shut it down for repairs. Act too early, and you waste money and halt production unnecessarily. Wait too long, and a catastrophic failure can damage the entire assembly line.

Moving Beyond Thresholds

The traditional method relies on rigid, threshold-driven strategies. Engineers set an arbitrary limit—such as a specific vibration frequency or temperature—and trigger a repair only when the machine crosses that line.

This static approach ignores the complex, shifting variables of a busy factory floor. It forces operators to guess the optimal balance between avoiding failures and maintaining production continuity.
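To make the contrast concrete, a threshold-driven rule can be sketched in a few lines. The sensor fields and both limits below are hypothetical values chosen for illustration; they do not come from the study.

```python
from dataclasses import dataclass


@dataclass
class SensorReading:
    vibration_hz: float    # hypothetical vibration frequency reading
    temperature_c: float   # hypothetical temperature reading


# Assumed fixed limits; a real plant would set its own values.
VIBRATION_LIMIT_HZ = 120.0
TEMPERATURE_LIMIT_C = 85.0


def threshold_policy(reading: SensorReading) -> str:
    """Trigger a repair only when a reading crosses a fixed limit."""
    if (reading.vibration_hz > VIBRATION_LIMIT_HZ
            or reading.temperature_c > TEMPERATURE_LIMIT_C):
        return "repair"
    return "continue"
```

The rule looks at one reading at a time and has no notion of production cost or failure risk, which is the limitation described above.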

Treating Repairs as a Sequential Game

In a recent preprint, researchers tested a radically different framework. They reformulated the decision-making process as a Markov Decision Process, essentially treating maintenance as a sequential game in which each choice shapes the machine's future condition.

They trained a Deep Q-Network (DQN) agent using Deep Reinforcement Learning. Instead of reacting to a static threshold, the algorithm learns a dynamic policy by constantly weighing the rewards of continued production against the risks of a breakdown.
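The idea of learning a policy from rewards can be illustrated with a much smaller stand-in. The sketch below is not the study's Deep Q-Network: it uses plain tabular Q-learning on a toy machine with four health levels, and every probability, reward and cost in it is an invented assumption.

```python
import random

N_HEALTH_LEVELS = 4        # 0 = healthy ... 3 = severely degraded (toy model)
CONTINUE, MAINTAIN = 0, 1
P_DEGRADE = 0.3            # assumed chance of wear per production step
PRODUCTION_REWARD = 1.0    # assumed reward for a step of production
MAINTENANCE_COST = -5.0    # assumed cost of planned downtime
FAILURE_COST = -100.0      # assumed cost of a breakdown


def step(state, action, rng):
    """Return (next_state, reward) for one decision in the toy environment."""
    if action == MAINTAIN:
        return 0, MAINTENANCE_COST              # repair restores full health
    if rng.random() < P_DEGRADE:
        if state == N_HEALTH_LEVELS - 1:
            return 0, FAILURE_COST              # breakdown, then forced replacement
        return state + 1, PRODUCTION_REWARD     # machine wears by one level
    return state, PRODUCTION_REWARD             # no wear this step


def train(steps=50_000, alpha=0.1, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_HEALTH_LEVELS)]
    state = 0
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.choice((CONTINUE, MAINTAIN))
        else:
            action = CONTINUE if q[state][CONTINUE] >= q[state][MAINTAIN] else MAINTAIN
        next_state, reward = step(state, action, rng)
        # Standard Q-learning update towards the bootstrapped target.
        target = reward + gamma * max(q[next_state])
        q[state][action] += alpha * (target - q[state][action])
        state = next_state
    return q


def greedy_policy(q):
    """Read off the preferred action for each health level."""
    return ["continue" if row[CONTINUE] >= row[MAINTAIN] else "maintain" for row in q]
```

Calling `greedy_policy(train())` yields one action per health level. A DQN replaces the table `q` with a neural network so that the same update can cope with high-dimensional sensor data.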

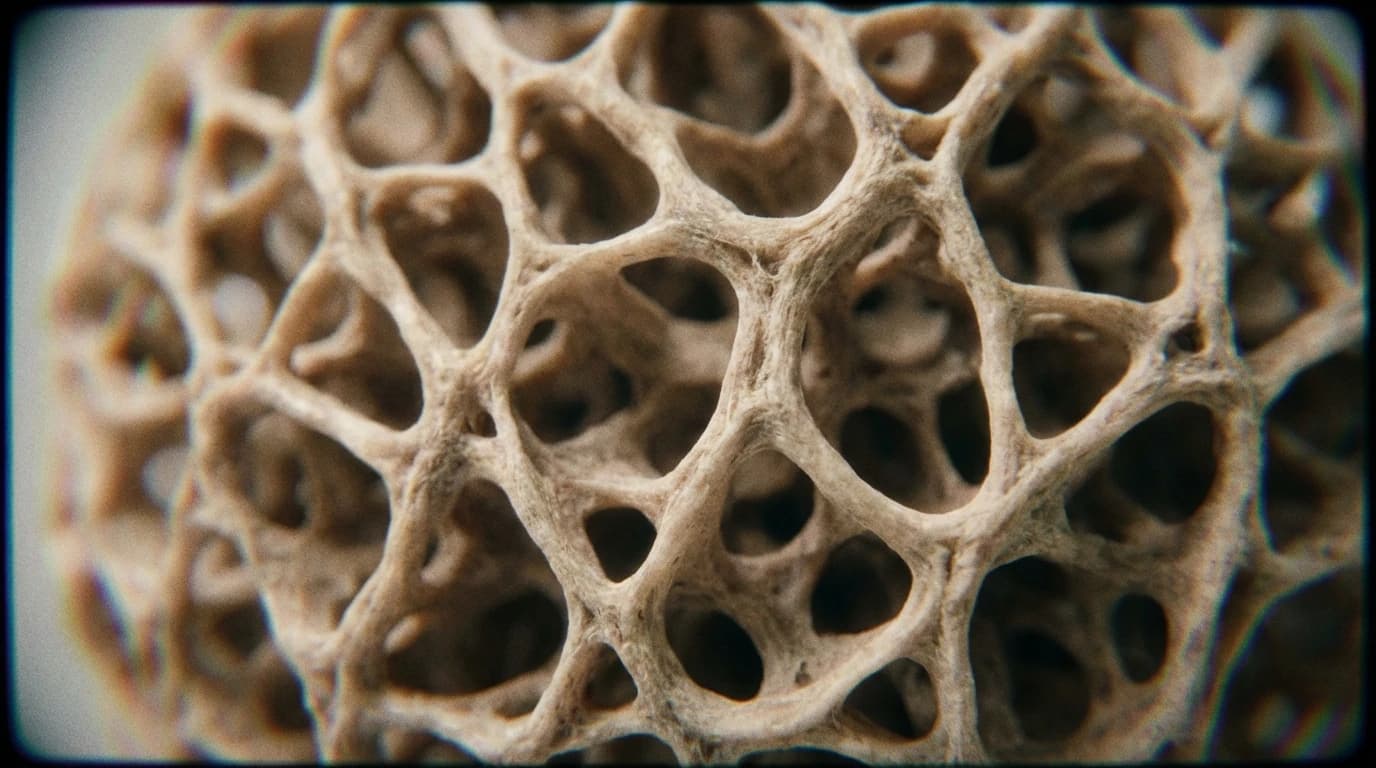

The researchers tested this model on a high-dimensional dataset of industrial water pumps. Rather than tracking isolated metrics, the agent models equipment health dynamics over time, learning a policy that balances the critical trade-off between avoiding catastrophic failure and maintaining production continuity.

Operating under noisy conditions, the agent successfully identified when to intervene with a 96.6% recall rate. This suggests that dynamic algorithms could serve as a highly effective decision-support layer.
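Recall is the share of genuinely needed interventions that the agent actually flagged. It says nothing about false alarms, which precision captures. A minimal sketch of both metrics, using invented labels rather than the study's data:

```python
def recall(y_true, y_pred):
    """Fraction of true intervention cases (1) that were flagged (1)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp + fn == 0:
        raise ValueError("recall is undefined when there are no positive cases")
    return tp / (tp + fn)


def precision(y_true, y_pred):
    """Fraction of flagged cases (1) that truly needed intervention (1)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    if tp + fp == 0:
        raise ValueError("precision is undefined when nothing was flagged")
    return tp / (tp + fp)


# Invented example: 1 = intervention needed / flagged, 0 = not.
needed = [1, 1, 1, 0, 0, 0, 0, 0]
flagged = [1, 1, 0, 1, 1, 0, 0, 0]
```

With these made-up labels, recall is 2/3 and precision is 1/2. A high recall figure on its own therefore does not reveal how many unnecessary shutdowns a policy would trigger.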

What the Algorithm Misses

Because this research is currently an early-stage preprint awaiting peer review, we must view these high success rates with healthy scepticism. The study does not yet solve the problem of real-world deployment complexity.

Testing solely on a specific public dataset of industrial pumps cannot fully replicate the unpredictable physical constraints, supply chain delays, or sudden budget restrictions inherent to live manufacturing environments. The study measures theoretical intervention success; it offers only a suggestion of how a factory manager might actually integrate these automated decisions into a human-led workforce.

Complementary Systems

This approach does not entirely replace the old method. The authors note that policy-based reinforcement learning works best as a complement to conventional threshold-driven strategies.
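The article does not say how the two approaches would be combined. One plausible arrangement, offered purely as an assumption, keeps a hard threshold as a safety override and defers to the learned policy otherwise. The function name, the temperature signal and the limit are all hypothetical.

```python
def combined_decision(policy_action: str,
                      temperature_c: float,
                      hard_limit_c: float = 90.0) -> str:
    """Follow the learned policy unless a hard safety limit is crossed.

    policy_action: "continue" or "repair", as recommended by a learned policy.
    hard_limit_c:  assumed safety limit; not taken from the study.
    """
    if temperature_c >= hard_limit_c:
        return "repair"          # threshold rule overrides the policy
    return policy_action         # otherwise defer to the policy
```

Under this arrangement the threshold acts as a backstop that operators can audit, while the policy handles the finer timing decisions below the limit.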

If validated through peer review, this framework could standardise how factories organise their repair schedules. By bridging the gap between raw prediction and strategic action, manufacturing centres may finally stop guessing when to reach for the spanner.