How Deep Learning Posture Recognition Turns Your Skeleton Into Data

Source Publication: Scientific Reports

Primary Authors: Shi, Yu, Li et al.

The New Digital Coach

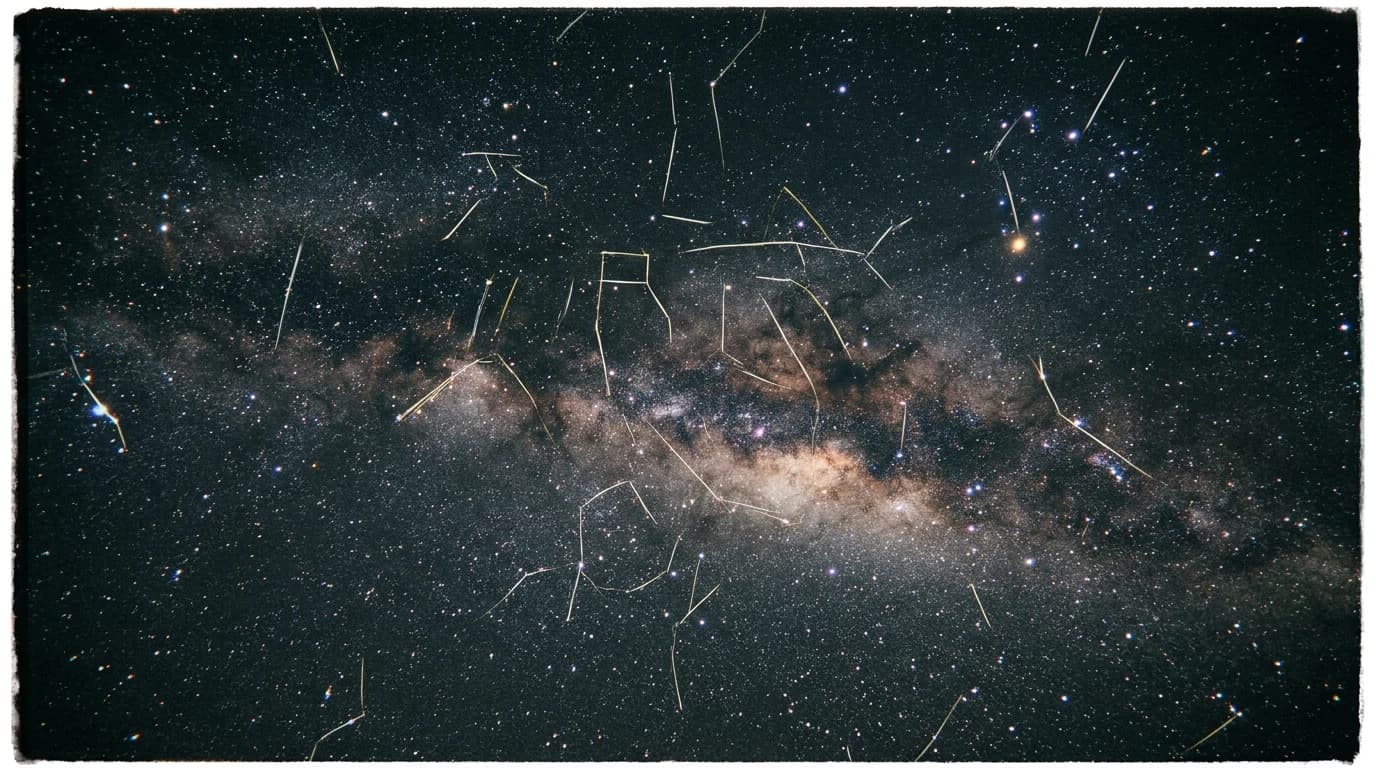

Imagine your body is a constellation of stars. A standard camera sees the dots, but it misses the invisible gravity pulling them together. Deep Learning Posture Recognition acts like a high-speed telescope that maps those hidden connections in real time.

These results were observed under controlled laboratory conditions, so real-world performance may differ.

Coaches traditionally relied on manual observation or basic software. These tools often failed to capture the quick, complex shifts in a sprinter’s stride or a swimmer’s stroke. They saw the movement but lacked the maths to explain the underlying mechanics.

Advancing Deep Learning Posture Recognition

Researchers developed the Deep Dynamic Graph Attention Posture Recognition (DDGAPR) model. It treats the human skeleton as a dynamic graph, where joints are nodes and limbs are edges. By using 'attention' mechanisms, the AI learns which joints matter most during specific movements.
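To make the graph idea concrete, here is a minimal sketch of attention over a skeleton graph. It is not the DDGAPR implementation: the five-joint arm chain, the feature values, and the function names are all hypothetical, and plain dot-product similarity stands in for the learned scoring a trained model would use.

```python
import math

# Hypothetical toy skeleton: joints are nodes, limbs are edges.
JOINTS = ["neck", "shoulder", "elbow", "wrist", "hand"]
LIMBS = [("neck", "shoulder"), ("shoulder", "elbow"),
         ("elbow", "wrist"), ("wrist", "hand")]


def build_neighbors(joints, limbs):
    """Map each joint to itself plus every joint it shares a limb with."""
    neighbors = {j: [j] for j in joints}
    for a, b in limbs:
        neighbors[a].append(b)
        neighbors[b].append(a)
    return neighbors


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(features, neighbors):
    """Score each neighbour by dot-product similarity, then normalise.

    Assumption: a real model would learn this scoring; raw dot products
    are used here only to keep the sketch self-contained.
    """
    weights = {}
    for joint, nbrs in neighbors.items():
        scores = [sum(x * y for x, y in zip(features[joint], features[n]))
                  for n in nbrs]
        weights[joint] = dict(zip(nbrs, softmax(scores)))
    return weights


def aggregate(features, weights):
    """Replace each joint's features with the attention-weighted neighbour mix."""
    updated = {}
    for joint, w in weights.items():
        dim = len(features[joint])
        updated[joint] = [sum(w[n] * features[n][d] for n in w)
                          for d in range(dim)]
    return updated


# Made-up 2D joint velocities for a single video frame.
features = {
    "neck": [0.0, 0.1],
    "shoulder": [0.2, 0.1],
    "elbow": [0.5, 0.4],
    "wrist": [1.0, 0.9],
    "hand": [1.1, 1.0],
}

neighbors = build_neighbors(JOINTS, LIMBS)
weights = attention_weights(features, neighbors)
updated = aggregate(features, weights)
```

In this toy frame the elbow places more weight on the fast-moving wrist than on the slower shoulder, which is the sense in which attention picks out the joints that "matter most" for a movement.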

The model does not just watch; it calculates. It applies spatial and temporal filters to understand how a shoulder’s position at second one affects a wrist’s flick at second two. In this specific study, the authors report the following gains over older models:

- Motion recognition accuracy increased by 18%.

- Validation accuracy rose by 9.4%.

- True positive classifications improved by 9.1%.

- Average attention weight increased by 10%.
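The spatial and temporal filtering mentioned earlier can be illustrated with a small sketch. It assumes the simplest possible filters, a neighbour average within each frame followed by a sliding weighted sum across frames, rather than the learned filters of the actual model. The three-joint skeleton, the frame values, and the function names are hypothetical.

```python
def spatial_filter(frame, neighbors):
    """Within one frame, average each joint's value with its connected joints."""
    return {joint: sum(frame[n] for n in nbrs) / len(nbrs)
            for joint, nbrs in neighbors.items()}


def temporal_filter(sequence, kernel):
    """Slide a 1D kernel along the time axis, joint by joint.

    Only positions where the kernel fits entirely are kept, so the output
    has len(sequence) - len(kernel) + 1 frames.
    """
    k = len(kernel)
    return [
        {joint: sum(kernel[i] * sequence[t + i][joint] for i in range(k))
         for joint in sequence[t]}
        for t in range(len(sequence) - k + 1)
    ]


# Hypothetical three-joint arm; each joint lists itself and its limb neighbours.
arm = {
    "shoulder": ["shoulder", "elbow"],
    "elbow": ["elbow", "shoulder", "wrist"],
    "wrist": ["wrist", "elbow"],
}

# Made-up activity values: motion travels from shoulder to wrist over 3 frames.
frames = [
    {"shoulder": 1.0, "elbow": 0.0, "wrist": 0.0},
    {"shoulder": 0.0, "elbow": 1.0, "wrist": 0.0},
    {"shoulder": 0.0, "elbow": 0.0, "wrist": 1.0},
]

smoothed = [spatial_filter(frame, arm) for frame in frames]
motion = temporal_filter(smoothed, kernel=[0.5, 0.5])
```

After both passes, each output value blends a joint's own activity with that of its neighbours and of the adjacent frame, so activity at the shoulder in one frame now shows up in the elbow's value. Stacking such layers is how an early shoulder movement can come to inform the reading of a later wrist movement.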

The technology points to a future where athletes receive more nuanced, data-driven feedback. At this stage of development, the model demonstrates how AI can refine performance optimisation by capturing the subtle 'flow' of a movement. By turning raw video into a detailed map of joint interactions, the system could eventually help coaches tailor training sessions more precisely, moving sports development into a more data-led era.