How AI is Fixing the Visual Illusions in Bladder Cancer MRI

Source Publication: European Radiology

Primary Authors: Fan, Li, Chen et al.

The Hook

Imagine trying to spot a hidden water leak behind a wall just by looking at the damp wallpaper. If the damp patch forms a neat, raised bubble, you might easily guess how deep the water goes.

But if the damp is a wide, flat smudge, your eyes might play tricks on you. You might assume the rot goes much deeper into the bricks than it actually does.

Radiologists face a remarkably similar visual trick when reading a bladder cancer MRI. The shape of the tumour often fools the human eye, leading to a misjudgement of how deep the disease goes.

The Context of Bladder Cancer MRI

When a patient has a tumour in their bladder, doctors need to know if the abnormal cells have invaded the underlying muscle wall. This single detail dictates the entire treatment plan.

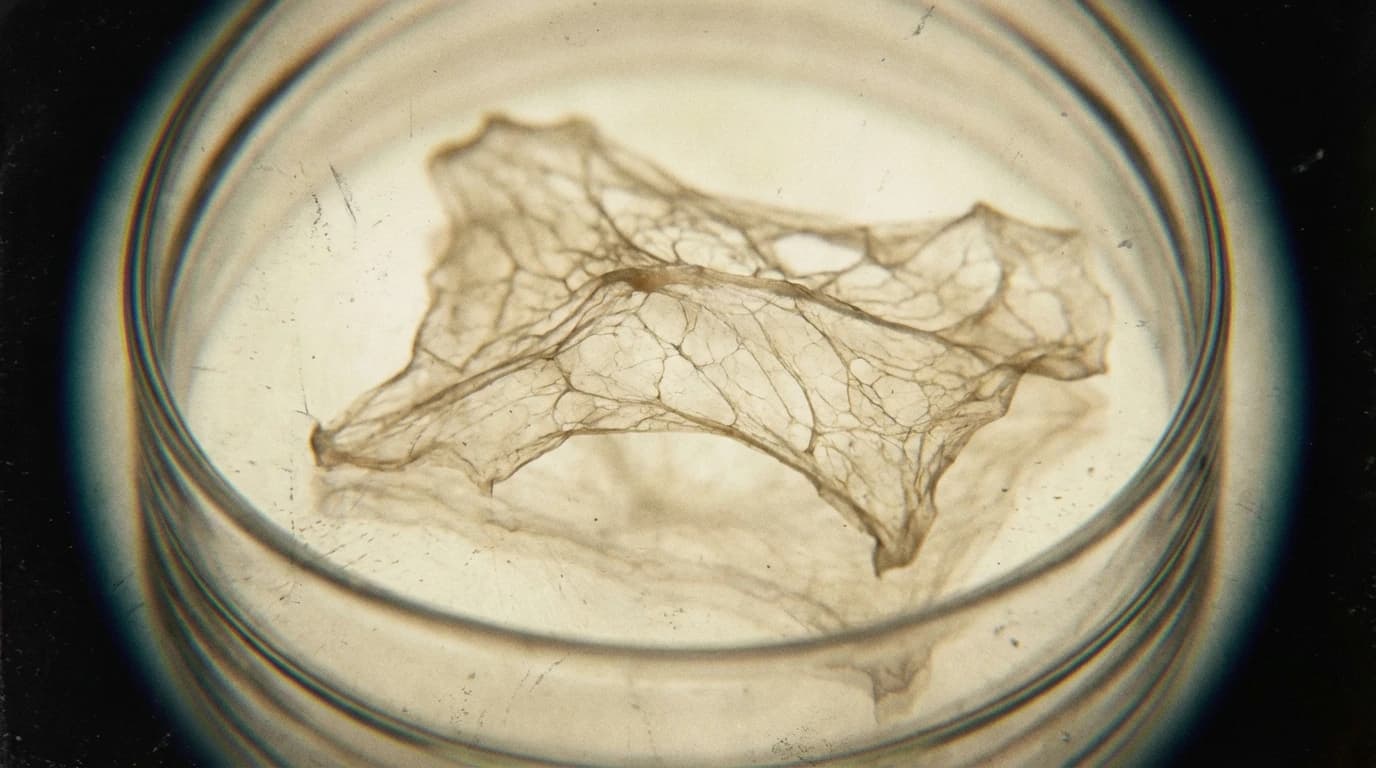

To find out, doctors rely on a bladder cancer MRI to peer inside the body. But tumours generally grow in two distinct shapes: pedunculated (like a mushroom on a stalk) or sessile (flat and broad).

Radiologists are excellent at judging the mushroom-shaped growths. However, the flat tumours create a visual bias. Human readers frequently overestimate how deeply these flat masses have invaded the muscle.

The Discovery

To fix this visual bias, researchers trained a deep learning algorithm using scans from 1,374 patients across multiple medical centres. They wanted to see if a machine could ignore the distracting shapes.

The team used a two-part AI system. First, one algorithm highlighted the exact borders of the tumour. Then, a second model analysed those borders to determine the depth of the invasion.
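The two-stage flow described above can be sketched in a few lines of Python. This is only an illustration of how the output of the first stage feeds the second. The function names, the toy pixel grid and the threshold rules are all hypothetical placeholders standing in for trained networks, not the models used in the study.

```python
from typing import List

Image = List[List[float]]  # toy 2D grid of pixel intensities (hypothetical)
Mask = List[List[int]]     # 1 = tumour pixel, 0 = background


def segment_tumour(image: Image, threshold: float = 0.5) -> Mask:
    """Stage 1 stand-in: mark every pixel brighter than `threshold` as tumour.

    A real system would use a trained segmentation network here.
    """
    return [[1 if px > threshold else 0 for px in row] for row in image]


def classify_invasion(image: Image, mask: Mask, cutoff: float = 0.8) -> bool:
    """Stage 2 stand-in: look only at the outlined region and return a yes/no.

    The mean-intensity rule is a placeholder with no clinical meaning.
    """
    values = [
        px
        for image_row, mask_row in zip(image, mask)
        for px, inside in zip(image_row, mask_row)
        if inside
    ]
    if not values:  # nothing was outlined, so there is nothing to classify
        return False
    return sum(values) / len(values) > cutoff


def predict_muscle_invasion(image: Image) -> bool:
    """Chain the two stages: outline the tumour first, then classify it."""
    mask = segment_tumour(image)
    return classify_invasion(image, mask)
```

The point of the design is that the second stage only ever sees the region the first stage has outlined, so its decision rests on the outlined tissue rather than on an overall impression of the scan.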

The researchers measured three key metrics during the tests:

- The overall accuracy of detecting muscle invasion.

- The sensitivity: how often true cases of muscle invasion were caught.

- The specificity: how often tumours without muscle invasion were correctly cleared, avoiding false alarms.
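For readers who want to see how these three metrics relate, here is a minimal sketch using their standard definitions. The function name and the example counts are hypothetical and are not taken from the study.

```python
def diagnostic_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Compute accuracy, sensitivity and specificity from the four outcome counts.

    tp: invasive tumours correctly flagged as invasive
    fp: non-invasive tumours wrongly flagged as invasive (false alarms)
    tn: non-invasive tumours correctly cleared
    fn: invasive tumours that were missed
    """
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,   # share of all calls that were right
        "sensitivity": tp / (tp + fn),   # share of invasive cases caught
        "specificity": tn / (tn + fp),   # share of non-invasive cases cleared
    }


# Hypothetical counts, for illustration only
example = diagnostic_metrics(tp=40, fp=5, tn=45, fn=10)
```

With these made-up counts, accuracy is 0.85, sensitivity is 0.8 and specificity is 0.9. The sketch assumes each group contains at least one case; otherwise the divisions would fail.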

The results were striking. For the flat tumours, the human radiologists' accuracy dropped significantly. Their specificity fell to around 75 percent, meaning roughly one in four tumours that had not invaded the muscle was wrongly flagged as invasive.

The AI model, however, was not thrown by the shape. It maintained a specificity of up to 96 percent, whether the tumour was flat or raised.
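To make the gap concrete, the short sketch below turns a specificity figure into an expected number of false alarms. The two specificity values are the approximate ones quoted in this article. The group of 100 non-invasive flat tumours is a hypothetical round number, not data from the study.

```python
def expected_false_alarms(specificity: float, n_non_invasive: int) -> int:
    """Estimate how many non-invasive tumours would be wrongly flagged as invasive.

    The false alarm rate is simply 1 minus the specificity.
    """
    return round((1 - specificity) * n_non_invasive)


# Hypothetical group of 100 flat tumours with no muscle invasion
radiologist_false_alarms = expected_false_alarms(0.75, 100)
ai_false_alarms = expected_false_alarms(0.96, 100)
```

Under that assumption, the radiologists' figure works out to about 25 false alarms per 100 non-invasive tumours, against about 4 for the AI at its best reported specificity.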

The Impact

This study suggests that AI could serve as a reliable second pair of eyes in the clinic. Because the algorithm is not swayed by a tumour's shape, it provides a more objective assessment.

For patients, this could mean fewer false alarms. If a doctor overestimates the depth of a flat tumour, a patient might undergo far more aggressive treatments than necessary.

Going forward, integrating this type of deep learning into routine hospital scans may help doctors make better, fairer decisions. It offers a practical way to standardise care, making it less likely that a visual illusion dictates a patient's treatment.