ASTERIS and the Fidelity of Astronomical Image Denoising

Source Publication: Science

Primary Authors: Guo, Zhang, Li et al.

The authors of the ASTERIS algorithm claim that self-supervised learning can push the detection limit of space telescope imaging a full magnitude deeper. Historically, the search for the earliest galaxies has been a battle against the inherent noise floor of electronic detectors; astronomers have long struggled to distinguish faint signals from random static.

The mechanics of astronomical image denoising

Current reduction pipelines typically rely on stacking exposures to average out random fluctuations. This works, but it is inefficient against noise that is not purely random. ASTERIS (Astronomical Self-supervised Transformer-based Denoising) takes a different route. It integrates information across both space (neighbouring pixels) and time (successive exposures). By training on the data itself, the model learns to identify noise structures that persist or correlate between pixels, rather than simply smoothing them over. The researchers report that this approach preserves the point spread function (the characteristic shape a point-like light source takes on the detector) while stripping away the interference.
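The limitation of stacking can be illustrated with a toy simulation. The sketch below is not the ASTERIS method; it only assumes, for illustration, that each exposure contains independent Gaussian noise plus a fixed pattern shared by every exposure. The function name `stack_noise` and the parameters `sigma_random` and `sigma_fixed` are hypothetical.

```python
import numpy as np

def stack_noise(n_exposures, shape=(64, 64), sigma_random=1.0,
                sigma_fixed=0.3, seed=0):
    """Residual noise (standard deviation) of a mean stack of blank exposures."""
    rng = np.random.default_rng(seed)
    # Pattern common to all exposures: a stand-in for noise that is not purely random.
    fixed = rng.normal(0.0, sigma_fixed, size=shape)
    # Independent noise, drawn separately for every exposure.
    random_part = rng.normal(0.0, sigma_random, size=(n_exposures, *shape))
    # Mean stack: the independent part averages down, the shared part does not.
    stack = (random_part + fixed).mean(axis=0)
    return float(stack.std())

for n in (1, 16, 256):
    print(n, round(stack_noise(n), 3))
```

Under these assumptions the independent component falls roughly as one over the square root of the number of exposures, while the residual levels off near `sigma_fixed` instead of approaching zero. That floor is the kind of structure a method that models correlations could, in principle, address.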

The technical divergence here is substantial. Traditional methods often treat noise as a stochastic, independent variable, much like trying to ignore individual raindrops on a window. In contrast, ASTERIS operates on the premise of correlated noise. It recognises that noise in astronomical detectors often has a relationship between neighbouring pixels and sequential exposures. Where standard processing might discard a faint signal as a fluctuation, this transformer-based model uses the spatiotemporal context to validate it. It does not merely suppress the 'rain'; it learns the pattern of the storm to see what lies behind it.
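What "correlated noise" means can be made concrete with a simple diagnostic. The sketch below measures how strongly each pixel's noise value tracks that of its right-hand neighbour. It is an illustrative check, not part of the published pipeline; the helper `neighbour_correlation` and the toy way of inducing correlation (averaging adjacent pixels) are assumptions made for the example.

```python
import numpy as np

def neighbour_correlation(image):
    """Pearson correlation between each pixel and its right-hand neighbour."""
    left = image[:, :-1].ravel()
    right = image[:, 1:].ravel()
    return float(np.corrcoef(left, right)[0, 1])

rng = np.random.default_rng(1)

# Independent ("raindrop") noise: every pixel is drawn on its own.
white = rng.normal(size=(128, 128))

# Toy correlated noise: each pixel is averaged with its horizontal neighbour,
# so adjacent pixels share half of their underlying random draw.
correlated = 0.5 * (white + np.roll(white, 1, axis=1))

print("independent:", round(neighbour_correlation(white), 3))
print("correlated: ", round(neighbour_correlation(correlated), 3))
```

For the independent frame the coefficient should sit close to zero, while the averaged frame should come out near 0.5. The same measurement can be taken along the exposure axis of an image cube to probe correlations between sequential exposures.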

Implications for high-redshift surveys

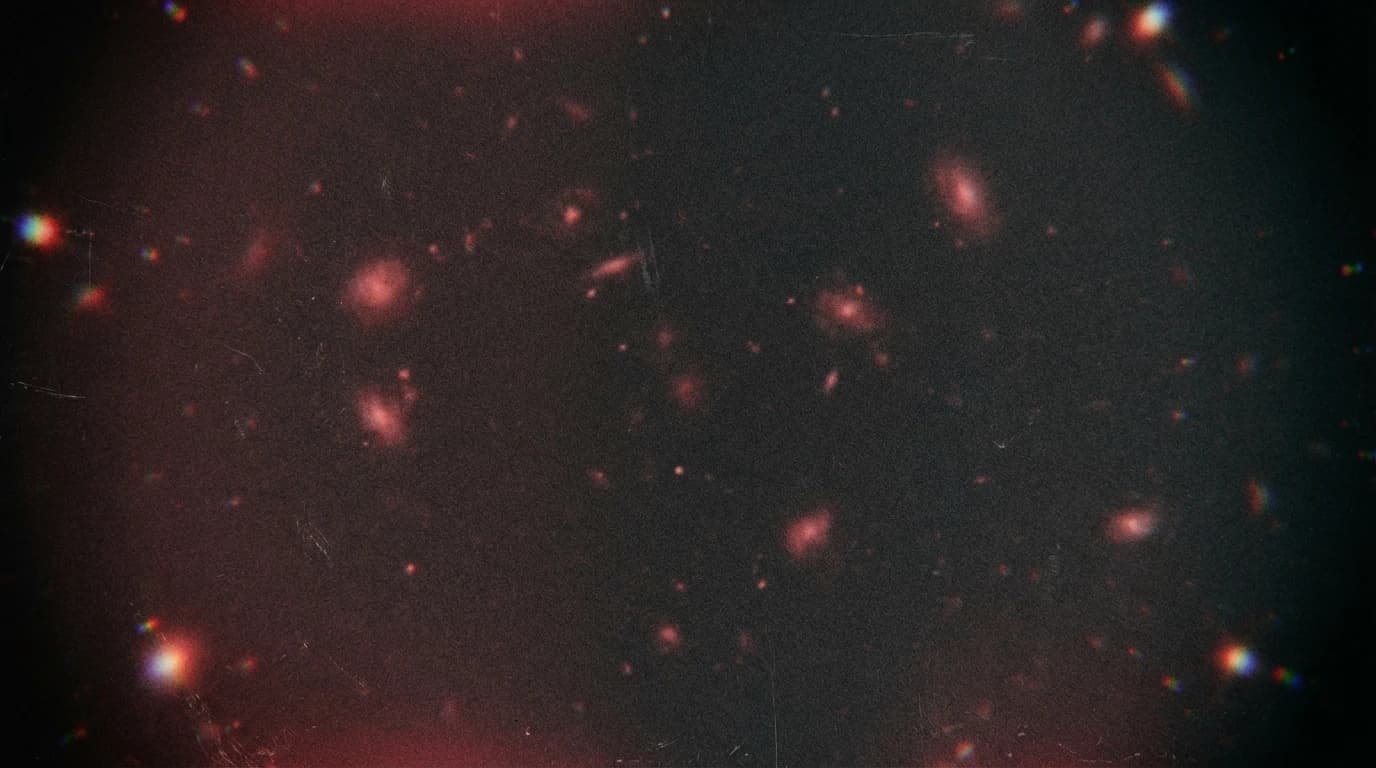

The validation phase used data from the James Webb Space Telescope (JWST) and the Subaru Telescope. The results are striking. When applied to deep fields, the algorithm identified three times more galaxy candidates at redshift > 9 than previous methods. Furthermore, these candidates were fainter, reaching rest-frame ultraviolet luminosities 1.0 magnitude fainter than what was previously detectable. This suggests that the population of early universe galaxies may be far denser than current catalogues indicate.
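To put a gain of 1.0 magnitude in perspective, the standard magnitude relation converts a magnitude difference into a flux ratio of 10^(0.4 × Δm). The sketch below also estimates how much extra exposure time plain stacking would need to reach the same depth, under the textbook assumption (not taken from the study) that signal-to-noise grows as the square root of exposure time with independent, background-dominated noise. The function names are hypothetical.

```python
def flux_ratio(delta_mag):
    """Flux ratio corresponding to a magnitude difference (Pogson relation)."""
    return 10 ** (0.4 * delta_mag)

def exposure_factor(delta_mag):
    """Extra exposure time needed to reach delta_mag deeper at fixed S/N.

    Assumes S/N scales as sqrt(time), i.e. independent, background-limited noise.
    """
    return flux_ratio(delta_mag) ** 2

print(round(flux_ratio(1.0), 3))       # about 2.512
print(round(exposure_factor(1.0), 2))  # about 6.31
```

On those assumptions, sources one magnitude fainter are about 2.5 times dimmer in flux, and reaching them by stacking alone would take roughly 6.3 times the observing time.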

However, caution is necessary. Neural networks are prone to generating artefacts that mimic real structures. While the study's benchmarks show high purity and photometric accuracy, the risk of 'hallucinated' features in low-signal regimes remains a concern for the wider community. Independent spectroscopic confirmation of these new candidates will be required to verify that ASTERIS is truly seeing the invisible, rather than inventing it.